Deep Dive #2: The Right Way to Think About "Generative" AI

In the second installment of our Deep Dive series, we take a closer look at the hype around "generative" AI and argue that investors may not be thinking about opportunities in GenAI in the right way.

By Sam Musker, Kelly Yang, and Cécile Tang

Overview of Generative AI

Generative AI has rapidly become one of the most closely watched technologies for investors. The sheer size of certain investments is staggering, especially in a market of depressed investment in new technologies (for example, Microsoft’s $10 billion investment in OpenAI is large enough to be listed as its own line item in Bain’s global breakdown of venture capital investment by geography).1 Clearly, investors believe that the technology is likely to be a major source of value creation.

Earlier generative AI systems were more commonly either language models (such as GPT-3) or image generators (such as DALL-E 2), although models in other and mixed modalities are becoming more prevalent (such as the auditory-language model Whisper and the vision-language model GPT-4).

The “hype” around generative AI is, in our view, well-justified. These models have advanced the state of the art in AI significantly, and are already being deployed in numerous applications, driving meaningful revenue growth and cost savings in a variety of industries.2

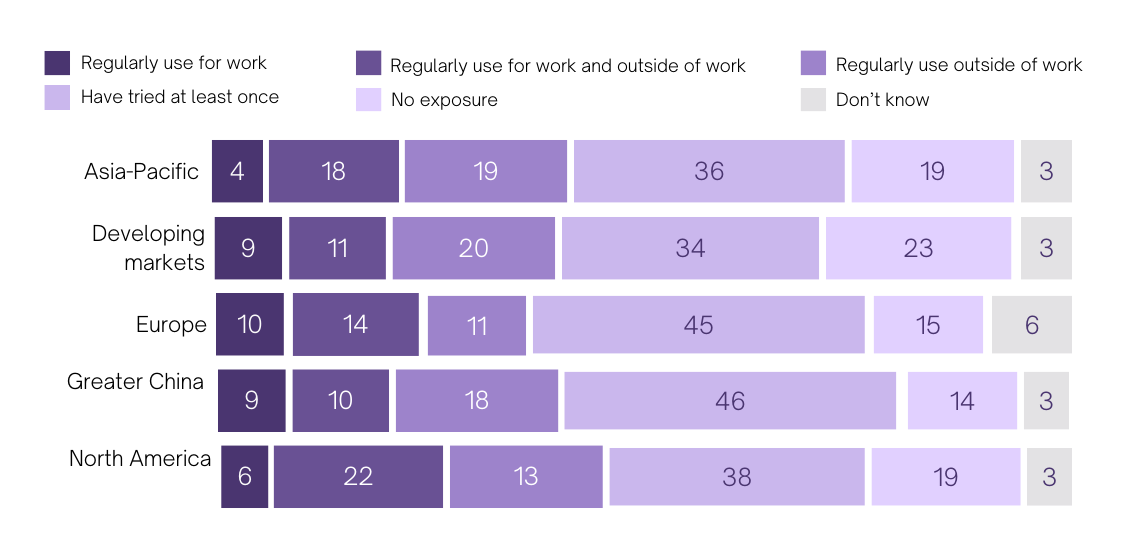

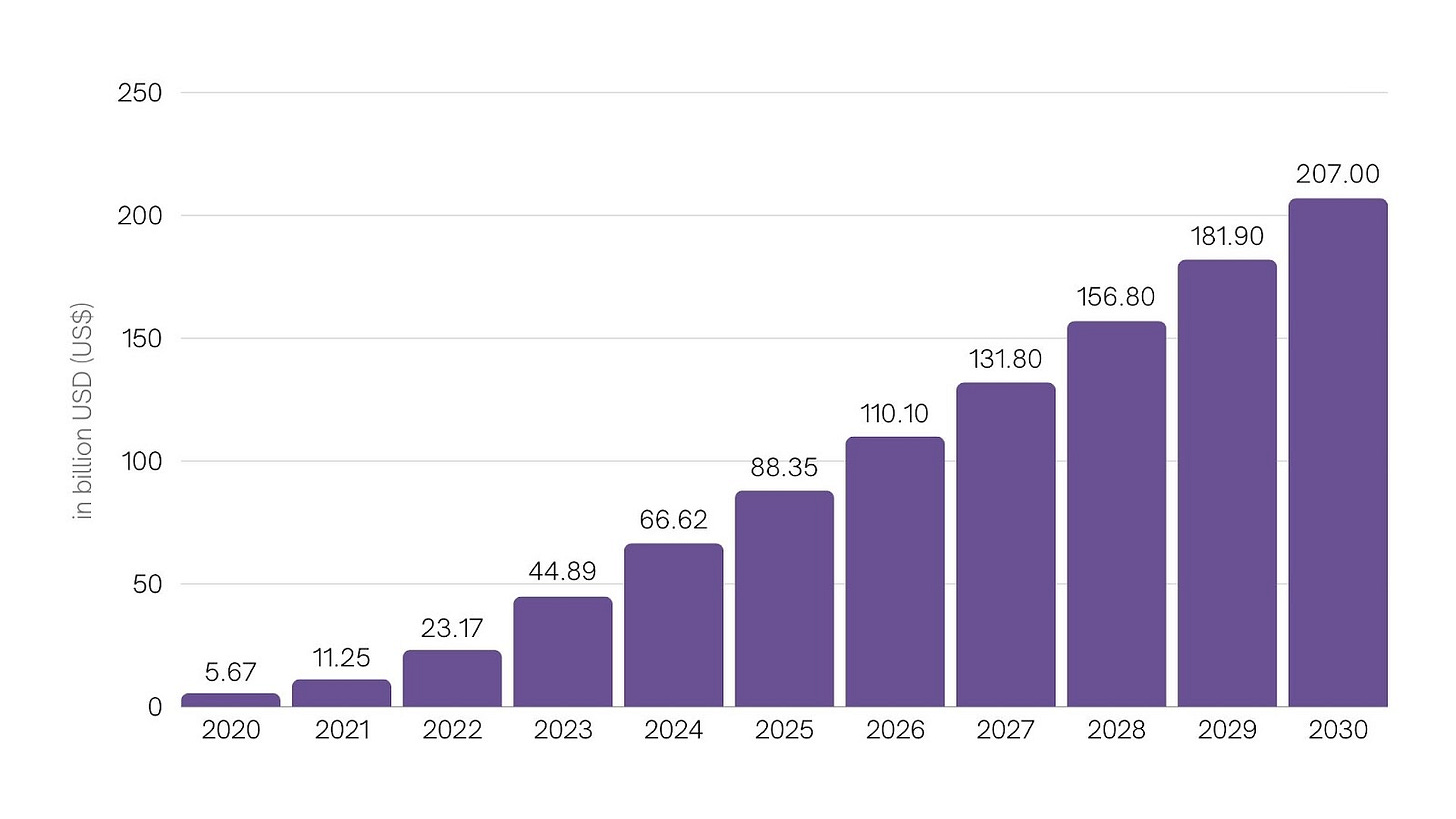

Over 5% of workers across geographies now report using generative AI regularly for work, and Statista Market Insights projects a market exceeding $200B by 2030 (see Figures 1 and 2 below).

Figure 1: Generative AI usage by geography.3

Figure 2: Generative AI projected market size.4

The projection given in Figure 2 is, in our view, overly conservative. We think that investors should instead expect a generative AI market of several trillion dollars by 2030.

To support this claim, consider the relative valuations of Microsoft and Alphabet as an indicator of the value that the market assigns to an increased probability of dominating future generative AI revenues. Based on data from the Wall Street Journal, Alphabet is trading at a P/E ratio of 26, only slightly above the S&P 500 weighted average of 23. By contrast, Microsoft is trading at a P/E ratio of 35, a premium of roughly 35% over Alphabet that is supported by investor enthusiasm. A similar premium remains when comparing forward P/E ratios, indicating that the difference is more likely a reflection of long-run investor sentiment than of a material difference in near-term profit projections. Much of the positive sentiment towards Microsoft can be attributed to investor perceptions that it is more likely to succeed in future generative AI markets, as a result of its current highly successful relationship with OpenAI.5 Conversely, Alphabet’s pricing by the market is hurt by fears that information provided directly by LLMs in response to user queries could eat into search, the core of its business.6

This 35% stock price premium is worth approximately $700bn of additional market capitalization for Microsoft. Importantly, this value is not solely attributable to generative AI, nor does it represent investors pricing in a 100% probability of Microsoft cornering 100% of the generative AI market while Alphabet takes home nothing. Assume for the sake of argument that 70% of this premium is due to generative AI projections, and that it prices in a 33% chance of Microsoft taking home 2x more of the generative AI market than Alphabet, a 33% chance of the two taking home the same portion, and a 33% chance of each taking home nothing (plenty of other strong players exist in the space, and moats may not be large7). Assume further that in the first two cases, the two companies take home half of the generative AI market between them. These assumptions put the net present value of the generative AI market at a little over $5tn. If we also assume that this represents the net present value of 20 years of generative AI profits, increasing linearly from 0 over that period and discounted at 7%, then this places the profit in 2030 from generative AI at $350bn, consistent with a 2030 generative AI market size of several trillion dollars. To summarize the argument: if the premium the market is willing to pay for Microsoft over Alphabet is largely due to a higher likelihood of Microsoft dominating in generative AI, then the market consensus on total generative AI revenues in 2030 must be in the trillions to justify the size of that premium.
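The discounting step in this argument can be checked with a short sketch. It takes the roughly $5tn net present value, the 20-year horizon, and the 7% discount rate from the text. Two things are our own assumptions and not stated in the article: that profits are counted at the end of each year, and that year 1 is 2025, so that 2030 is year 6. The function and variable names are purely illustrative.

```python
def discount_weight_sum(years: int, rate: float) -> float:
    """Sum of t / (1 + rate)**t for t = 1..years.

    This is the NPV of a profit stream that grows by 1 unit per year from 0.
    """
    return sum(t / (1 + rate) ** t for t in range(1, years + 1))


def profit_in_year(npv: float, years: int, rate: float, year_index: int) -> float:
    """Profit in year `year_index` for a linear ramp whose discounted sum is `npv`."""
    slope = npv / discount_weight_sum(years, rate)  # profit added each year
    return slope * year_index


NPV_USD = 5.0e12       # "a little over $5tn" from the text, rounded down
HORIZON_YEARS = 20     # from the text
DISCOUNT_RATE = 0.07   # from the text
YEAR_INDEX_2030 = 6    # assumption: year 1 is 2025, so 2030 is year 6

profit_2030 = profit_in_year(NPV_USD, HORIZON_YEARS, DISCOUNT_RATE, YEAR_INDEX_2030)
print(f"Implied 2030 profit: ${profit_2030 / 1e9:.0f}bn")
```

Under these assumptions the implied 2030 profit lands in the mid-$300bn range, in line with the figure quoted above. Shifting the assumed start year by one moves the result by one year's increment of the ramp, so the conclusion is not very sensitive to that choice.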

These numbers help to make sense of the scale of Sam Altman’s recent efforts to raise $5-7tn to enhance chip production capacity in order to support the growth of generative AI.8 Given that such investments in chip infrastructure would not be solely for the purposes of fueling the generative AI market, this scale of investment may not be as unreasonable as it might appear. If the 2030 size of the generative AI market were instead just $200B, as modeled by Statista Market Insights, then this scale of investment would be patently misguided.

How should investors think about where the biggest opportunities lie for this exciting technology that could underlie a new multi-trillion-dollar industry?

How the Mass Media and Some Investors Misunderstand the Technology

Over-indexing on the “generative” angle implied by the generative AI moniker may mislead investors as to the nature of the technology and where its largest economic opportunities lie. “Generative” AI is evocative of “creative” or “productive” AI, and indeed early public and investor attention has focused on creative and productive applications: using Large Language Models to write media content, using generative image models to create synthetic art and design, and the like.

While generative AI certainly unlocks exciting business opportunities in creative and productive applications, it is fairly accidental that the term “generative AI” aligns with these early use cases. Generative AI derives its name largely from the earlier term “generative model,” which itself is often used as a contrast with the term “discriminative model.”

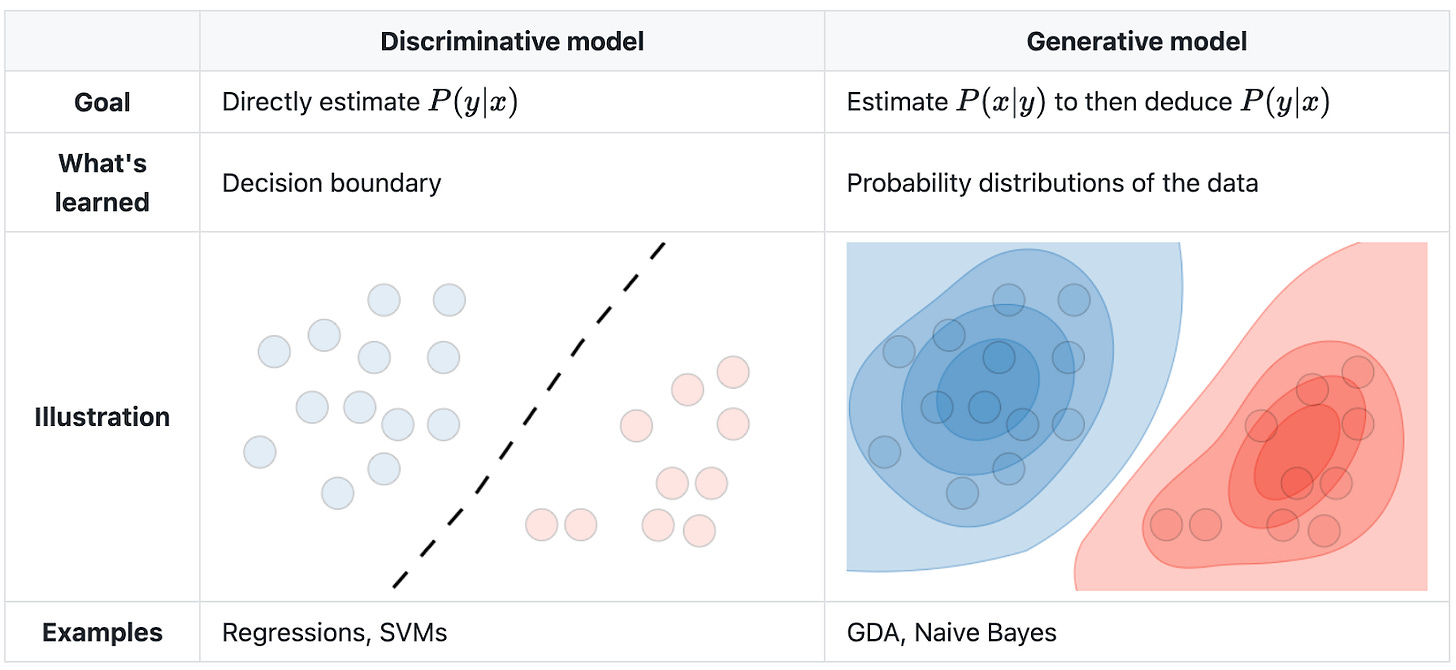

These technical terms have obscure mathematical underpinnings that have little to do with commonsense notions of what such models might be good for. For example, Jebara characterizes generative models, as opposed to discriminative models, as follows: “Generative [...] approaches produce a probability density model over all variables in a system and manipulate it to compute classification and regression functions. Discriminative approaches provide a direct attempt to compute the input-output mappings for classification and regression.”9 This difference is outlined in Figure 3 below.

Figure 3: A high level schematic of discriminative versus generative models.10
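For readers who prefer code to definitions, the distinction Jebara draws can be illustrated with a toy sketch. The data, model choices (one Gaussian per class versus logistic regression), and function names below are our own illustrative assumptions, not taken from the cited work. The generative approach fits a density over both the input and the label and then applies Bayes' rule to classify; the discriminative approach learns the input-to-label mapping directly.

```python
import math
import random

random.seed(0)

# Hypothetical 1-D data: class 0 centered at -1, class 1 centered at +1.
data = [(random.gauss(-1.0, 1.0), 0) for _ in range(200)] + \
       [(random.gauss(1.0, 1.0), 1) for _ in range(200)]


def fit_generative(samples):
    """Model the joint p(x, y) = p(y) * p(x | y) with one Gaussian per class."""
    params = {}
    for label in (0, 1):
        xs = [x for x, y in samples if y == label]
        mean = sum(xs) / len(xs)
        var = sum((x - mean) ** 2 for x in xs) / len(xs)
        params[label] = (len(xs) / len(samples), mean, var)  # prior, mean, variance
    return params


def gaussian_pdf(x, mean, var):
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def generative_posterior(params, x):
    """Manipulate the fitted joint with Bayes' rule to obtain p(y = 1 | x)."""
    joint = {y: prior * gaussian_pdf(x, mean, var)
             for y, (prior, mean, var) in params.items()}
    return joint[1] / (joint[0] + joint[1])


def generative_sample(params, label):
    """The same fitted density can also be sampled to produce new inputs."""
    _, mean, var = params[label]
    return random.gauss(mean, math.sqrt(var))


def fit_discriminative(samples, steps=500, lr=0.1):
    """Logistic regression: learn p(y = 1 | x) directly, with no model of x."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in samples:
            err = 1 / (1 + math.exp(-(w * x + b))) - y
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / len(samples)
        b -= lr * grad_b / len(samples)
    return w, b


def discriminative_posterior(model, x):
    w, b = model
    return 1 / (1 + math.exp(-(w * x + b)))


gen = fit_generative(data)
disc = fit_discriminative(data)
for x in (-2.0, 0.0, 2.0):
    print(x, round(generative_posterior(gen, x), 2),
          round(discriminative_posterior(disc, x), 2))
print("sample from class 1:", round(generative_sample(gen, 1), 2))
```

Both models end up answering the same classification question, but only the generative one can also produce new samples. Note that the generative model here is used for classification, a task that is not "generative" in the everyday sense.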

Importantly for investors, the upshot is this: the “generative” in generative AI is best interpreted as a training trick for coaxing a deep neural network into effectively representing a target domain. Once a network has been trained to effectively represent its domain, the ways in which it can be utilized are highly flexible. That the recent wave of progress in AI has occurred under the banner of generative AI should tell investors approximately nothing about application areas involving the generation of content being more apt for disruption than application areas that do not straightforwardly involve it.

Indeed, given that the vast majority of economically valuable activity does not straightforwardly involve the generation of novel content, investors should explicitly assume that content generation will not be the main application of generative AI.

Generative AI Doesn’t Mean “Generative” Applications

The best examples available to illustrate the point come from the last few years of AI research. Some highlights follow, with each representing an example of using a generatively trained model in an application domain that is not “generative” in the colloquial sense.

Perhaps closest to home for investors is a trading algorithm, produced by pretraining a generative network on historical price data and public Tweets before using the network as the basis for a stock-buying agent.11 More generally, the broad problem areas of classification, planning, and control have all witnessed significant progress from the redeployment of generatively pretrained networks. In the problem domain of classification, generatively pretrained models are well-represented in the leaderboard for ImageNet classification,12 supporting applications such as the use of a generative vision-language model to flag harmful memes online.13 Generatively trained models have also been utilized in the domain of planning, in which an agent must decide the best course of action given available information,14,15,16 and in the related domain of robotic control.17 Given that most economically valuable tasks can be reformulated as some combination of content generation, classification, planning, and control, investors would be well advised not to assume that generative models will be primarily “generative” in deployment in the common sense of the word.

How Misunderstanding Generative AI Can Lead Investors Astray

If it is likely that investors are currently over-focused on application domains for generative AI that are generative in the colloquial sense, then what tangible implications would this have?

One specific suggestion is that Adobe may be overvalued. Generative AI does not feature in the company’s Q3 2022 earnings call,18 but is prominent from the Q4 2022 earnings call19 onwards. Correspondingly, its stock price has almost doubled since September 2022, reaching a P/E ratio of 45, a 45% premium over the comparison-class ratio of 31 for the S&P 500 Equal Weight Technology Index. If generative AI is best deployed in content creation applications, then Adobe is a well-placed company to reap the rewards. But if generative AI’s long-run application domains will not be closely tied to applications that are colloquially generative, then this price increase may not be sustainable and the stock may still be overvalued despite a recent pullback. In the private markets, parallel justifications have been given for Canva’s valuation multiples being even loftier than Adobe’s, also based partly on a generative AI angle.20 One might argue that this similarly reflects a misjudgement of where the technology will have an outsized impact.

The Right Way to Think About Opportunities in Generative AI

More generally, if thinking in terms of applications that are colloquially generative is not a good guide to where opportunities lie in generative AI, then what is? Crucially, generative AI succeeds in domains in which enormous amounts of data are available, and fails when data are scarcer. Unlike earlier approaches, however, new models are better able to repurpose pretraining on a large amount of related data before fine-tuning on more specific data from the task at hand (for intuition, think of a large language model pretraining on internet-scale text before being fine-tuned to grade algebra homework). A better heuristic for identifying opportunities in generative AI might then be inspired by the other buzzword rapidly entering the public discourse: foundation models, or large models that are pretrained on a broad dataset before being fine-tuned for a specific use case. The largest economic opportunities in generative AI, then, may lie not in application domains which are colloquially generative in nature, but rather in opportunities where models pretrained on data of an enormous scale can be fine-tuned for a specific use case.

A recent cohort of startups is already repurposing generative models for these applications. Three of note are Figure, Waabi, and Oscilar. Figure, which raised $675 million at a $2.6 billion valuation, has partnered with OpenAI and aims to enable intelligent humanoid robots using an end-to-end deep learning approach. Waabi has raised over $83 million and similarly aims to take generative AI into the real world with its foundation model for self-driving cars.21 But deploying generative models in non-generative applications isn’t limited to physical-world applications. Oscilar, self-funded with $20 million, aims to bring generative models to credit risk assessment and fraud detection.

Concluding Thoughts

In short, because generative models amount to a technique for modeling the underlying distribution of any domain in which sufficient data exist, the application areas are relatively unconstrained. As such, the primary opportunities should be expected to mirror the largest slices of the economic pie for which good data can be obtained. Investors, therefore, shouldn’t expect the biggest opportunities in generative AI to lie in text and image generation. The multi-trillion-dollar question of what bigger opportunities remain unexplored is left as an exercise for the reader.

We have compiled a more detailed discussion of our key arguments and insights in the PDF below:

VWV is perpetually learning about the GenAI space — if you have any thoughts on our deep dive or are building in this space, feel free to reach out to us at vwv@brown.edu to compare notes. 💡